Brief description of the problem

I’m trying to migrate to Rockstor 4.1 (openSUSE). The install is not working: it freezes on an HP ProLiant MicroServer N54L (AMD Turion), and adding the nomodeset kernel option has made no difference.

Before prodding around some more (and no doubt needing help for that) I thought I’d reinstate the previous install.

This hasn’t worked.

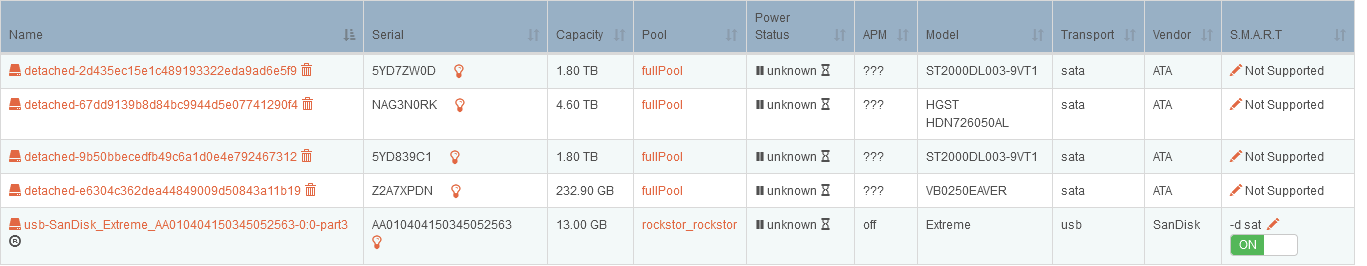

The system boots up and sees the data discs (although SMART is reported as not supported, and I believe it used to be), but the pool is not mounted. The shares are visible but empty. (Of course, if I could get the pool mounted in the V4.1 system, this wouldn’t be a problem.)

Detailed step by step instructions to reproduce the problem

- Attempt to install Rockstor V4.1 (with data discs unplugged)

- Give up after several attempts to install, e.g. pressing Ctrl+Alt+Del before the prompt to select a locale

- Revert to the previous boot disc (3.9.1-16)

- System fails to see pool - discs are there but the pool fails to mount.

Note: although the data discs were unplugged before any attempt to install, they were plugged in during at least one boot that reached grub but did not enter the installer, i.e. grub found nothing to pass control to.

Web-UI screenshot

Error Traceback provided on the Web-UI

    Traceback (most recent call last):
      File "/opt/rockstor/src/rockstor/rest_framework_custom/generic_view.py", line 41, in _handle_exception
        yield
      File "/opt/rockstor/src/rockstor/storageadmin/views/pool_balance.py", line 47, in get_queryset
        self._balance_status(pool)
      File "/opt/rockstor/eggs/Django-1.8.16-py2.7.egg/django/utils/decorators.py", line 145, in inner
        return func(*args, **kwargs)
      File "/opt/rockstor/src/rockstor/storageadmin/views/pool_balance.py", line 72, in _balance_status
        cur_status = balance_status(pool)
      File "/opt/rockstor/src/rockstor/fs/btrfs.py", line 1064, in balance_status
        mnt_pt = mount_root(pool)
      File "/opt/rockstor/src/rockstor/fs/btrfs.py", line 283, in mount_root
        'Command used %s' % (pool.name, mnt_cmd))
    Exception: Failed to mount Pool(fullPool) due to an unknown reason. Command used ['/bin/mount', u'/dev/disk/by-label/fullPool', u'/mnt2/fullPool']
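
Since the Web-UI only reports "an unknown reason", it may help to re-run the same mount by hand and look at what the kernel logged. The following is a minimal sketch, not Rockstor code: it assumes Python 3, root privileges, and that `btrfs` and `dmesg` are on the PATH. The helper names `mount_command`, `run` and `diagnose` are made up for illustration. The mount command itself is copied from the exception above.

```python
import subprocess


def mount_command(label, mount_root="/mnt2"):
    """Rebuild the mount command shown in the traceback for a pool label."""
    return ["/bin/mount", f"/dev/disk/by-label/{label}", f"{mount_root}/{label}"]


def run(cmd):
    """Run cmd and return (exit code, stdout, stderr) without raising."""
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True)
    except OSError as exc:  # e.g. the binary is missing or not executable
        return 127, "", str(exc)
    return proc.returncode, proc.stdout, proc.stderr


def diagnose(label):
    """Print what btrfs sees, retry the mount, then show recent kernel messages."""
    for cmd in (["btrfs", "filesystem", "show"], mount_command(label)):
        rc, out, err = run(cmd)
        print("$", " ".join(cmd), "-> exit", rc)
        print(out or err)

    # The kernel log usually carries the real reason a btrfs mount failed.
    rc, out, err = run(["dmesg"])
    print("\n".join(out.splitlines()[-20:]) or err)


if __name__ == "__main__":
    # Pool label taken from the exception message; must be run as root.
    diagnose("fullPool")
```

The output of `btrfs filesystem show` and the last few `dmesg` lines are the parts most worth pasting into a reply, since they normally name the missing device or the mount error that the Web-UI hides.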