@tonyhr0x Hello again, and thanks for the added info. Should help folks chip in with more assistance.

Re:

That is actually the kernel version. But it’s enough to know roughly the underlying base OS version. It comes from the following command:

rleap15-3:~ # uname -a

Linux rleap15-3 5.3.18-150300.59.106-default #1 SMP Mon Dec 12 13:16:24 UTC 2022 (774239c) x86_64 x86_64 x86_64 GNU/Linux

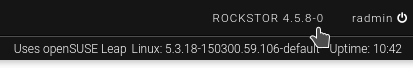

The Rockstor version is displayed in the very top-right of the Web-UI:

So there it’s 4.5.8-0, our latest testing channel version:

Plus we have:

Which suggests you are on what is now an End Of Life (EOL) base OS (openSUSE Leap) version.
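If you want to confirm the base OS version directly rather than infer it from the kernel string, the following is a minimal sketch. It assumes the standard /etc/os-release file is present, and the function name `os_version_id` is just something I made up for illustration:

```shell
# Hypothetical helper: print VERSION_ID from an os-release style file.
# Defaults to /etc/os-release; an alternative path can be passed as $1.
os_version_id() {
    # Source the file in a subshell so its variables do not leak
    # into the calling shell.
    ( . "${1:-/etc/os-release}" && printf '%s\n' "$VERSION_ID" )
}
```

Running `os_version_id` with no arguments should print just the version number of the installed base OS.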

As to the cause of the problems you are having, the following is suspicious:

That rather suggests that your system disk is not well and has likely gone read-only. Btrfs will often force a pool into a read-only state if problems are found. Or this may be a read error from the disk itself.

This command will tell us if btrfs itself has seen disk errors under the “/” mount, which is the “ROOT” labelled pool:

btrfs dev stats /

The output should be something like:

[/dev/sda4].write_io_errs 0

[/dev/sda4].read_io_errs 0

[/dev/sda4].flush_io_errs 0

[/dev/sda4].corruption_errs 0

[/dev/sda4].generation_errs 0

Your system drive may well be different, as here mine is sda, but you get the idea.
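If the list is long, or there are several devices, a small filter can pick out only the counters that are not zero. This is just a sketch under my own assumptions: the function name `btrfs_stats_nonzero` is hypothetical, and it assumes each line has the "[device].counter value" shape shown above:

```shell
# Hypothetical helper: reads `btrfs dev stats` output on stdin and
# prints only the counters whose value is not zero.
# Exit status: 0 if every counter is zero, 1 if any counter is non-zero.
btrfs_stats_nonzero() {
    awk '
        # Field 2 is the counter value; skip lines where it is not a number.
        $2 ~ /^[0-9]+$/ && $2 != 0 { print; bad = 1 }
        END { exit bad + 0 }
    '
}
```

Used as `btrfs dev stats / | btrfs_stats_nonzero`, it prints nothing and exits 0 on a pool with all-zero counters.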

Another command to try and get some info here is this one:

rleap15-3:~ # mount | grep " / "

/dev/sda4 on / type btrfs (rw,noatime,space_cache,subvolid=258,subvol=/@/.snapshots/1/snapshot)

Note that on my example system the output of that mount command shows rw, which is what is expected. A system disk that has gone read-only would show ro there instead.
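To pull just that flag out of the mount output, something like the following could work. Again a sketch only: `root_mount_mode` is a name I have invented here, and it assumes the "device on / type fstype (options)" line format shown above:

```shell
# Hypothetical helper: reads `mount` output on stdin and prints "rw" or
# "ro" for the filesystem mounted on "/".
root_mount_mode() {
    awk '
        $2 == "on" && $3 == "/" {
            opts = $NF                  # e.g. "(rw,noatime,...)"
            gsub(/[()]/, "", opts)      # strip the surrounding parentheses
            n = split(opts, o, ",")
            for (i = 1; i <= n; i++) {
                if (o[i] == "rw" || o[i] == "ro") { print o[i]; exit }
            }
        }
    '
}
```

Used as `mount | root_mount_mode`, it prints a single word, so a healthy system disk should give rw.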

Just trying to get more info on what may have happened here.

Upgrading from 15.3 to 15.4 in situ is possible, but not if your system disk is poorly.

Let’s see if those commands have anything to tell us.

Hope that helps, if only to help others here chip in with what may have happened.