Hi Everyone.

So my journey to Rockstor continues. I was coming from OMV 5, tested Rockstor 4.0.4 in a VM, and ended up going with the stable subscription. When I moved from the VM to my real-world NAS, I was once again able to re-register a new appliance ID with Appman, and once everything was backed up, I nuked and paved with Rockstor.

Since I was already running almost everything in Docker containers, migration was pretty straightforward. Most of the differences I encountered were due to the distribution and its tools (I had to learn zypper + friends, coming from apt). I’m pleased to say I was able to get my old “infrastructure” up in short order under Rockstor.

Couple of things I changed/skewed from stock:

- Installed DockSTARTer. This was painless and works just as it did for me under OMV. It even updated docker-compose in the process. I quickly re-deployed all my containers.

- Installed the Portainer “Rock-on” to manage all of this. Its UI is directly linkable via RockUI.

Some questions:

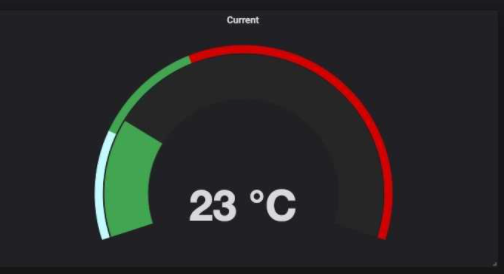

- There’s some sort of metrics tool running (I think it’s data-collector), and I assume it feeds the dashboard. Can it output to InfluxDB? Under OMV the collectd daemon supported this, but if I can leverage existing tools, awesome. I chart this data in Grafana to get nice dashboards about my NAS. Edit: I have written shell scripts that inject data directly into InfluxDB, so I assume it would be fairly trivial to read this data from the shell and do it myself?

- On SourceForge, where the ISO is downloaded from, there’s a 4.0.5 directory with a tarball that appears to be the update/patch. How is this installed? I built 4.0.4 using the installer method.
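
On the metrics question, here is a minimal sketch of the "do it myself" route: read the load averages from `/proc/loadavg`, format them as InfluxDB line protocol, and POST them to the write endpoint. It is written in Python rather than shell just to keep the escaping readable. It assumes an InfluxDB 1.x instance at `http://localhost:8086` with a database called `nas`; the database name, the `load` measurement, and the `host` tag are all hypothetical, and none of this touches Rockstor's own collector.

```python
import socket
import time
import urllib.request

# Assumed endpoint: InfluxDB 1.x HTTP write API, hypothetical database "nas".
INFLUX_WRITE_URL = "http://localhost:8086/write?db=nas"


def escape(value: str) -> str:
    """Escape commas, equals signs and spaces in tag/field keys and tag values."""
    return value.replace(",", "\\,").replace("=", "\\=").replace(" ", "\\ ")


def parse_loadavg(text: str) -> dict:
    """Turn the contents of /proc/loadavg into a dict of float fields."""
    one, five, fifteen = text.split()[:3]
    return {"load1": float(one), "load5": float(five), "load15": float(fifteen)}


def to_line(measurement: str, tags: dict, fields: dict, ts_ns: int) -> str:
    """Build one line-protocol record: measurement,tags fields timestamp."""
    tag_part = "".join(f",{escape(k)}={escape(v)}" for k, v in sorted(tags.items()))
    field_part = ",".join(f"{escape(k)}={float(v)}" for k, v in fields.items())
    return f"{measurement}{tag_part} {field_part} {ts_ns}"


def send(line: str, url: str = INFLUX_WRITE_URL) -> int:
    """POST a single record and return the HTTP status code."""
    request = urllib.request.Request(url, data=line.encode("utf-8"), method="POST")
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status


if __name__ == "__main__":
    with open("/proc/loadavg") as handle:
        fields = parse_loadavg(handle.read())
    record = to_line("load", {"host": socket.gethostname()}, fields, time.time_ns())
    print(record)
    send(record)
```

Run from cron or a systemd timer, something like this gives Grafana a series to chart without depending on whatever the dashboard uses internally.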

Cons?:

- The certificate interface needs way more polish. It accepted my SSL cert, but even after the install I can’t tell what, if anything, is actually installed.

- Only /opt/rockstor/var/log/rockstor.log seems to contain any logging (and it’s thin, unfortunately), so I have to determine runtime status/logging using systemctl status. Can /var/log/syslog be enabled/turned on? It makes troubleshooting easier. Edit: resolved by installing rsyslog and enabling all messages in /etc/rsyslog.conf.

Otherwise, it’s been a pretty painless transition; most of the growing pains were around me reading the openSUSE documentation on how to use their tools. My NAS isn’t super-awesome or anything, but it does host:

- Emby

- transmission

- Influxdb

- mariadb

- pybtble

- portainer

- homeassistant

- openzwave

- mosquitto

- heimdall

- nodered

- grafana

The NAS operates my home automation (and my family was relieved the transition Dad was doing went so smoothly) and various other services for my family.

Overall, I’m quite pleased and the journey continues.