Hi,

this is still on the non-openSUSE version of Rockstor (version 3.9.2-57). A few days ago I installed some updates which, I believe, included nginx among other things.

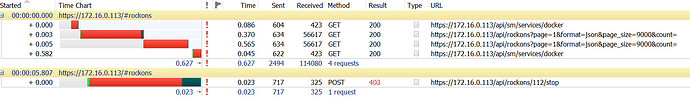

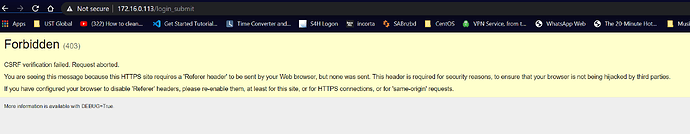

Now I have noticed that I cannot stop RockOns via the WebUI. I can flip the switch, but the usual spinning cog does not appear. I also cannot stop the RockOn service, and when I hit the Update button for the RockOn directory nothing happens: the blue refresh logo pops up for about a second and then disappears.

I’ve looked at items like this one: Plex not stoppable

but I don’t see anything like that in the logs. The only message I do see (and that’s why I pointed out nginx as one of the updated packages) is this:

DoesNotExist: NetworkConnection matching query does not exist.

[07/Oct/2020 16:08:34] ERROR [storageadmin.util:40] (Error while processing Rock-on profile at (http://rockstor.com/rockons/NginxProxyManager.json).). Lower level exception: (HTTPConnectionPool(host='rockstor.com', port=80): Max retries exceeded with url: /rockons/NginxProxyManager.json (Caused by <class 'socket.error'>: [Errno 4] Interrupted system call)).

[07/Oct/2020 16:08:34] ERROR [storageadmin.util:44] Exception: HTTPConnectionPool(host='rockstor.com', port=80): Max retries exceeded with url: /rockons/NginxProxyManager.json (Caused by <class 'socket.error'>: [Errno 4] Interrupted system call)

Traceback (most recent call last):

File "/opt/rockstor/src/rockstor/rest_framework_custom/generic_view.py", line 41, in _handle_exception

yield

File "/opt/rockstor/src/rockstor/storageadmin/views/rockon.py", line 440, in _get_available

cur_res = requests.get(cur_meta_url, timeout=10)

File "/opt/rockstor/eggs/requests-1.1.0-py2.7.egg/requests/api.py", line 55, in get

return request('get', url, **kwargs)

File "/opt/rockstor/eggs/requests-1.1.0-py2.7.egg/requests/api.py", line 44, in request

return session.request(method=method, url=url, **kwargs)

File "/opt/rockstor/eggs/requests-1.1.0-py2.7.egg/requests/sessions.py", line 279, in request

resp = self.send(prep, stream=stream, timeout=timeout, verify=verify, cert=cert, proxies=proxies)

File "/opt/rockstor/eggs/requests-1.1.0-py2.7.egg/requests/sessions.py", line 374, in send

r = adapter.send(request, **kwargs)

File "/opt/rockstor/eggs/requests-1.1.0-py2.7.egg/requests/adapters.py", line 209, in send

raise ConnectionError(e)
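The traceback ends in a single `requests.get(cur_meta_url, timeout=10)` call that fails with `[Errno 4] Interrupted system call`. As a rough way to check whether that failure is transient, here is a minimal sketch of a retry wrapper. The helper name `fetch_with_retry` and its parameters are my own assumptions for illustration; this is not how Rockstor itself fetches Rock-on profiles.

```python
import time


def fetch_with_retry(fetch, url, attempts=3, delay=1.0, retry_on=(IOError, OSError)):
    """Call fetch(url), retrying when it raises one of the retry_on exceptions.

    fetch    -- any callable taking a URL (hypothetical; e.g. a requests.get wrapper)
    attempts -- total number of tries, must be at least 1
    delay    -- seconds to wait between tries
    retry_on -- exception types treated as transient (an assumption; adjust as needed)
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for attempt in range(1, attempts + 1):
        try:
            return fetch(url)
        except retry_on as error:
            last_error = error
            # Only sleep if another try is still coming.
            if attempt < attempts:
                time.sleep(delay)
    # Every try failed: re-raise the most recent error.
    raise last_error


# Possible usage on the affected box (not run here):
#   import requests
#   fetch_with_retry(
#       lambda u: requests.get(u, timeout=10),
#       "http://rockstor.com/rockons/NginxProxyManager.json",
#       retry_on=(requests.exceptions.ConnectionError,),
#   )
```

If the fetch succeeds on a second or third try, the interruption is probably transient; if it fails every time, the problem is more likely on the network or nginx side.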

The RockOns that are running seem to be running fine, and I know I can stop them from the command line, but I was curious whether anybody else is encountering this. Yesterday I rebooted the server because I wanted the Plex RockOn updated; since the normal stop/start process didn’t work, I assumed a reboot might help, but that obviously did not address the issue.
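For anyone else landing here, the command-line fallback I mean is roughly the following. It is only a sketch, assuming the RockOn runs as a Docker container; the container name `plex` in the usage note is a placeholder, and the helper names are made up for illustration.

```python
import subprocess


def docker_stop_command(container_name, timeout_seconds=10):
    """Build the argv for `docker stop`; this does not run anything."""
    # -t is the number of seconds Docker waits before killing the container.
    return ["docker", "stop", "-t", str(timeout_seconds), container_name]


def stop_container(container_name, timeout_seconds=10, runner=subprocess.call):
    """Run `docker stop` for one container and return the exit code.

    runner is injectable so the command can be inspected without executing it;
    by default it is subprocess.call, which returns 0 on success.
    """
    return runner(docker_stop_command(container_name, timeout_seconds))
```

Calling `stop_container("plex")` would then run `docker stop -t 10 plex`; the real container names can be listed with `docker ps`.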

Here’s the yum history from that update:

Transaction performed with:

Installed rpm-4.11.3-43.el7.x86_64 @base

Installed yum-3.4.3-167.el7.centos.noarch @base

Installed yum-plugin-fastestmirror-1.1.31-54.el7_8.noarch @updates

Packages Altered:

Updated nginx-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Updated nginx-all-modules-1:1.16.1-1.el7.noarch @epel

Update 1:1.16.1-2.el7.noarch @epel

Updated nginx-filesystem-1:1.16.1-1.el7.noarch @epel

Update 1:1.16.1-2.el7.noarch @epel

Updated nginx-mod-http-image-filter-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Updated nginx-mod-http-perl-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Updated nginx-mod-http-xslt-filter-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Updated nginx-mod-mail-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Updated nginx-mod-stream-1:1.16.1-1.el7.x86_64 @epel

Update 1:1.16.1-2.el7.x86_64 @epel

Any suggestions?

Thanks.

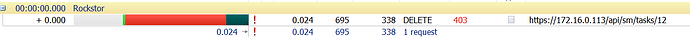

which is right after I finished replacing all of the disks. I guess the last device delete didn’t complete correctly and the system has been periodically trying to execute it again …
