Hi folks,

Hopefully someone can shed some light on an issue I’m experiencing after adding two more disks to my server, expanding the pool, and changing the RAID level from raid1 to raid5.

So far I’ve completed two balances, but I still end up with no new space, and the new disks (sdd and sde) show as 100% allocated after being added and balanced on raid5.

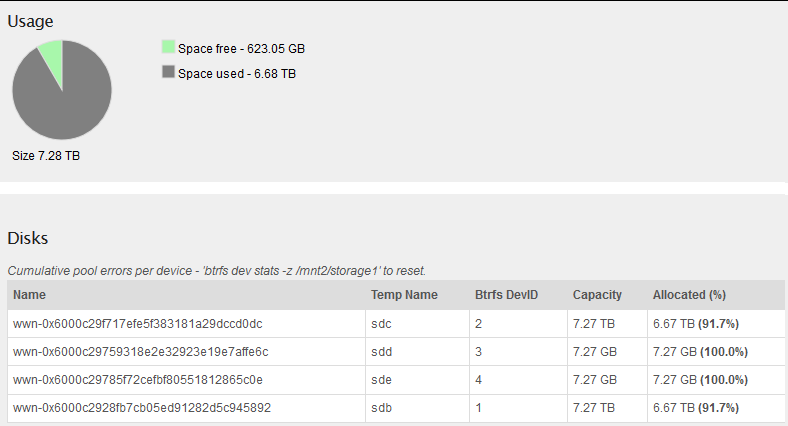

The server consists of 4x8TB disks, and the storage pool looks like the below:

As you can see, it’s showing 100% allocated on the new disks.

I have run a few commands on the box to get a bit more info, though I’m very unskilled with btrfs:

    [root@storage ~]# btrfs fi usage /mnt2/storage1/
    WARNING: RAID56 detected, not implemented
    WARNING: RAID56 detected, not implemented
    WARNING: RAID56 detected, not implemented
    Overall:
        Device size:          14.55TiB
        Device allocated:     0.00B
        Device unallocated:   14.55TiB
        Device missing:       0.00B
        Used:                 0.00B
        Free (estimated):     0.00B (min: 8.00EiB)
        Data ratio:           0.00
        Metadata ratio:       0.00
        Global reserve:       512.00MiB (used: 288.00KiB)

    Data,RAID5: Size:6.67TiB, Used:6.67TiB
       /dev/sdb    6.66TiB
       /dev/sdc    6.66TiB
       /dev/sdd    7.27GiB
       /dev/sde    7.27GiB

    Metadata,RAID5: Size:8.00GiB, Used:7.00GiB
       /dev/sdb    8.00GiB
       /dev/sdc    8.00GiB

    System,RAID5: Size:32.00MiB, Used:960.00KiB
       /dev/sdb    32.00MiB
       /dev/sdc    32.00MiB

    Unallocated:
       /dev/sdb    615.18GiB
       /dev/sdc    615.18GiB
       /dev/sdd    1.04MiB
       /dev/sde    1.04MiB

    [root@storage ~]# btrfs device usage /mnt2/storage1/
    /dev/sdb, ID: 1
       Device size:       7.27TiB
       Device slack:      0.00B
       Data,RAID5:        6.65TiB
       Data,RAID5:        7.27GiB
       Metadata,RAID5:    8.00GiB
       System,RAID5:      32.00MiB
       Unallocated:       615.18GiB

    /dev/sdc, ID: 2
       Device size:       7.27TiB
       Device slack:      0.00B
       Data,RAID5:        6.65TiB
       Data,RAID5:        7.27GiB
       Metadata,RAID5:    8.00GiB
       System,RAID5:      32.00MiB
       Unallocated:       615.18GiB

    /dev/sdd, ID: 3
       Device size:       7.27GiB
       Device slack:      0.00B
       Data,RAID5:        7.27GiB
       Unallocated:       1.04MiB

    /dev/sde, ID: 4
       Device size:       7.27GiB
       Device slack:      0.00B
       Data,RAID5:        7.27GiB
       Unallocated:       1.04MiB

    [root@storage ~]# btrfs fi show
    Label: 'rockstor_rockstor' uuid: 4dcbf3a9-b916-42e7-b142-9efc22dfb685
        Total devices 1 FS bytes used 2.28GiB
        devid 1 size 17.51GiB used 14.04GiB path /dev/sda3

    Label: 'storage1' uuid: 0721fd63-fc56-442e-b1d5-dd3128978845
        Total devices 4 FS bytes used 6.67TiB
        devid 1 size 7.27TiB used 6.67TiB path /dev/sdb
        devid 2 size 7.27TiB used 6.67TiB path /dev/sdc
        devid 3 size 7.27GiB used 7.27GiB path /dev/sdd
        devid 4 size 7.27GiB used 7.27GiB path /dev/sde
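
For anyone who wants to compare the per-device numbers side by side, the `devid` lines for storage1 above can be reduced to a size and a percentage allocated. This is only a rough sketch that assumes the `btrfs fi show` line format shown above; the names `to_bytes`, `parse_devids` and `SAMPLE` are made up for illustration and are not part of btrfs-progs.

```python
import re

# Binary unit suffixes as they appear in the output above.
UNITS = {"B": 1, "KiB": 1024, "MiB": 1024**2, "GiB": 1024**3, "TiB": 1024**4}

# Matches lines such as: devid 1 size 7.27TiB used 6.67TiB path /dev/sdb
DEVID = re.compile(r"devid\s+(\d+)\s+size\s+(\S+)\s+used\s+(\S+)\s+path\s+(\S+)")


def to_bytes(text):
    """Convert a value such as '7.27TiB' into a number of bytes."""
    m = re.fullmatch(r"([\d.]+)([KMGT]iB|B)", text)
    if m is None:
        raise ValueError(f"unrecognised size: {text!r}")
    return float(m.group(1)) * UNITS[m.group(2)]


def parse_devids(output):
    """Return one dict per devid line: path, size, used, percent allocated."""
    rows = []
    for m in DEVID.finditer(output):
        size = to_bytes(m.group(2))
        used = to_bytes(m.group(3))
        rows.append({
            "path": m.group(4),
            "size": size,
            "used": used,
            "pct": 100 * used / size,
        })
    return rows


# The storage1 devid lines, copied from the output above.
SAMPLE = """
devid 1 size 7.27TiB used 6.67TiB path /dev/sdb
devid 2 size 7.27TiB used 6.67TiB path /dev/sdc
devid 3 size 7.27GiB used 7.27GiB path /dev/sdd
devid 4 size 7.27GiB used 7.27GiB path /dev/sde
"""

for row in parse_devids(SAMPLE):
    size_gib = row["size"] / 1024**3
    print(f"{row['path']}: {size_gib:.2f} GiB total, {row['pct']:.1f}% allocated")
```

The regular expression only handles the four fields shown here, so output from a different btrfs-progs version may need a different pattern.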

Sorry for the lack of information here; I’m a little out of my depth. Hopefully someone can shed some light on what’s going on. I’m happy to supply any other information I can!

Thanks in advance,

Shaun